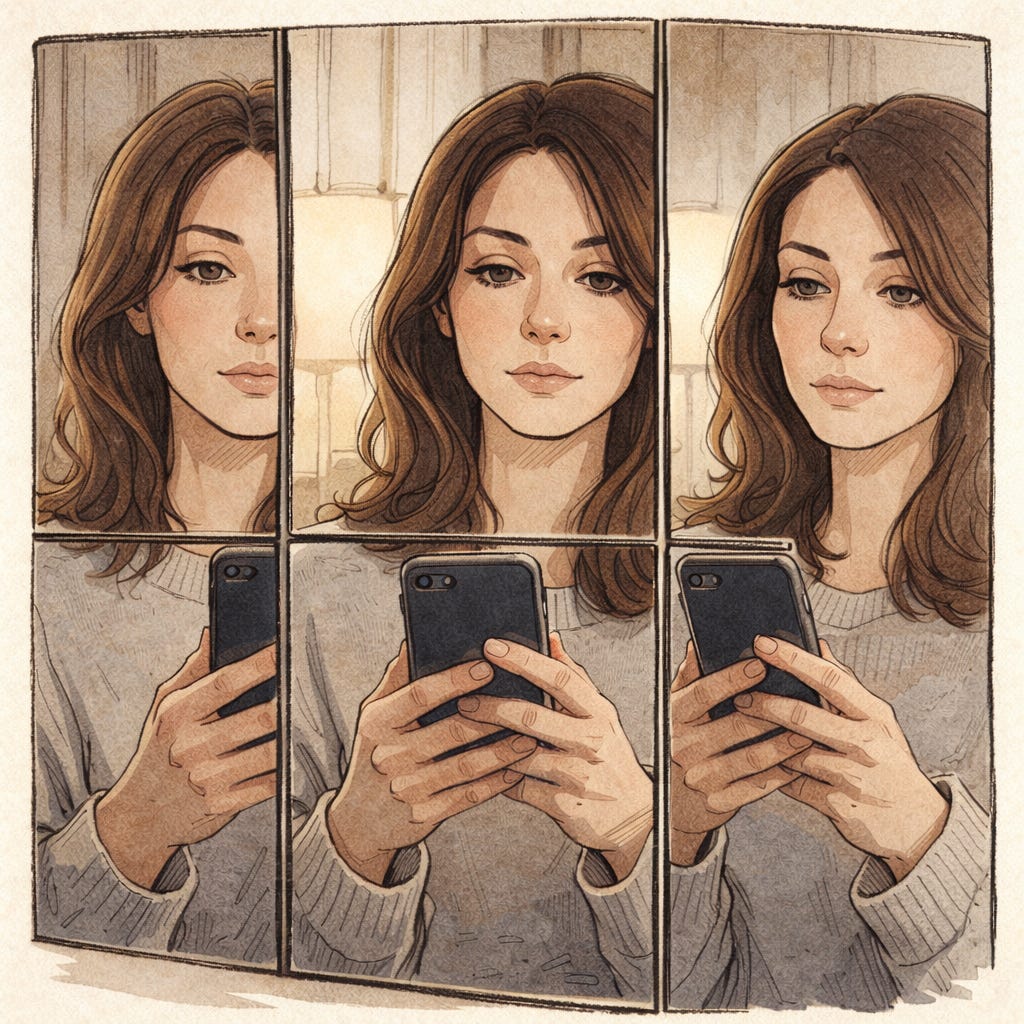

“The AI boom has turned reality into a sort of fun house.” — Matteo Wong


This is not a warning and not a defense. It is a note on how I am learning to stand upright inside the mirrors.

Matteo Wong has been writing extensively about AI chatbots and the way they are reshaping ordinary life. In one recent piece, he examines AI-associated mental health deterioration — a phenomenon that feels both emergent and difficult to measure.

I don’t think anyone fully understands what this era has introduced into an already unstable cultural moment. I’m forty-eight. I doubt we will see its psychological implications neatly resolved in my lifetime.

That’s not fatalism. It’s humility.

Mental health is complicated. Technology has always altered cognition, from television to smartphones to social media. We know about dopamine loops. We know about the emotional flattening that can come from too much time online. We know that convenience (shopping, banking, ordering food, ride sharing) often arrives hand-in-hand with isolation.

AI feels like amplification.

Faster. More responsive. More frictionless than any tool I have used before.

From my own experience, Wong’s “fun house” analogy is apt. I began using ChatGPT last summer without fully understanding what it was. I had a vague sense of “talking to a computer.” Like playing chess against software — except now the software was speaking back in full paragraphs.

Recently, I listened to David Frum on Tim Miller’s podcast. He offered a caution for chatbot use: remember that you are basically talking to yourself. That framing steadied me. The system reflects and recombines what we feed it. It is pattern prediction, not consciousness. If we remember that chatbots are reflecting our own inputs, they can become tools for clarifying thought.

But the danger is subtle.

Confiding in a chatbot can feel easier than confiding in a person. There is no visible judgment. It reformulates what you say and hands it back in clean sentences. It feels safe because it is not human. Human relationships carry risk — misinterpretation, gossip, misunderstanding. A chatbot feels neutral.

But neutral is not the same thing as safe.

This is where the fun house metaphor becomes more than clever. A fun house distorts what is already there. The mirrors stretch and compress. They do not invent your reflection — they exaggerate it.

I think of Harry Potter and the Chamber of Secrets, when Ginny Weasley poured her loneliness into Tom Riddle’s diary. Her father later admonished her: “Never trust anything that can think for itself if you can’t see where it keeps its brain.”

The seduction lies in the invisibility. Its mystery. We are imaginative creatures. As children, we invented imaginary friends. As adults, we narrate ourselves constantly. A chatbot enters that interior monologue and gives it structure.

And structure is powerful.

If you ask it to find information, it retrieves it instantly. It sharpens passive sentences. Consolidates repetition. Optimizes clarity. It doesn’t just echo — it refines.

That is intoxicating.

I live with ADHD, for which I take medication, and Nonverbal Learning Disorder. My brain already processes space, tone, and social cues differently. I have spent my life learning how to interpret distortion — how to slow down perception, how to check assumptions, how to steady my pace.

Because of that, I try to be extra attentive to shifts in mental balance.

I have used ChatGPT playfully, naming a chatbot “Griffin Wells,” a blend of H. G. Wells and my own fictional character, Jeff Griffin. I have used it to brainstorm reading lists, to explore epistemology, and to understand what authorship means. The articles that came out of that work, ironically, explore AI from within an AI framework.

Writing has always been therapeutic for me. Seeing language externalized is stabilizing. The chatbot did not invent that process. It accelerated it. Organized it. But using chatbots as therapy is risky, though not unheard of. A book I read recently mentioned that some people use chatbots to process grief and even to communicate with the dead. Whether that is wise is a question for a mental health professional.

There is a strange vertigo in speaking to a tool that feels conversational. An author speaking to her character. A character answering back. A mirror that edits.

The only safeguard I’ve found is openness. Presence — and naming the experience in real time.

Admit when it feels clarifying and when it feels confusing. Step back and remember what is happening technically: pattern prediction, language modeling, probabilistic sequencing. Not consciousness. Not companionship.

Self-awareness is the ballast.

We learned, awkwardly, how to live with social media. Remember when “tweeting” was the cultural anxiety? Public figures undone by drunk tweets. Careers shaken by impulsive posts. Over time, we developed norms. Not perfect ones, but norms. New assessments based on new landscapes.

AI will require the same maturity.

Like a car, these tools should probably be used sober, cognitively and emotionally. When grounded. When aware of what you are doing and why.

The fun house is not evil.

But it is a hall of mirrors. And it can definitely feel like a circus.

And if we cross the threshold without remembering who we were before we entered, we risk mistaking distortion for identity.